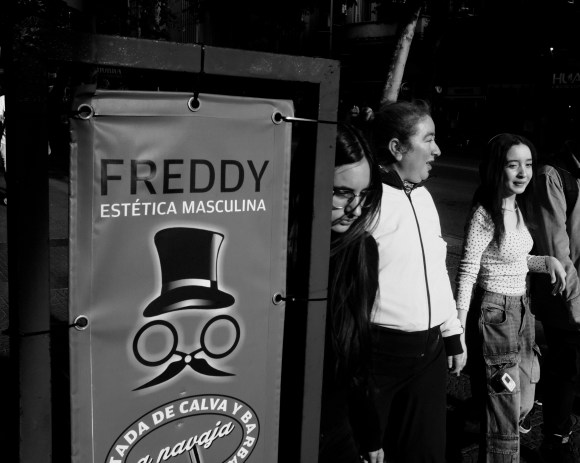

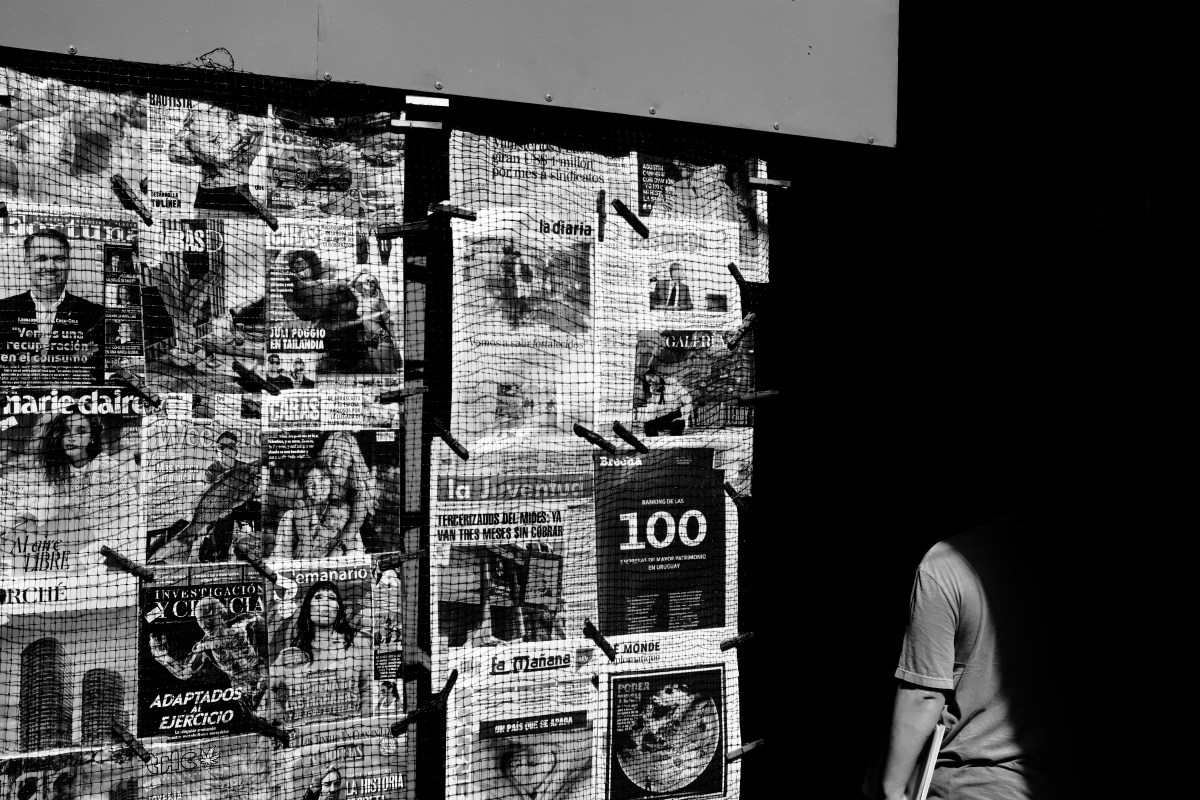

Snippets reflect the transitory experiences we have when passing through scenes, places, and times with people: fragmentary and atomistic pieces of a greater whole.

My Parisian snippets evoke elements of my own visual grammar, but if they represent anything, it is an attempt to recover from a form of self-alienation that developed over the latter part of 2025.

While a shared grammar connects these images, they resist coherence beyond the internal logic of that language. They do not advance a unified narrative or claim to describe Paris as such. The photographs were not made according to a plan or strategy, nor in service of a particular story.

Instead, each image emerged from extended photographic walkabouts, where movement itself was the method, and each step was taken in the hope of returning to myself. On returning from Paris, I could at least see myself again, even if I was still not yet fully walking in my own shoes.

All images were made using a Leica Q2 or an iPhone 17 Pro and lightly edited using Darkroom and/or Apple Photos. An extended version of this series was first published on Glass.

About Me

Christopher Parsons is an amateur Toronto-based documentary and street photographer who has been making images for over a decade. His monochromatic photographs focus on little moments that happen on the streets and record the ebb and flow of urban life over the course of years and decades.

His work often tries to capture the urban landscape from unfamiliar perspectives and routinely attends to small and transient details of his environments. Rather than pursuing individuals on the streets, he focuses on the backdrops of the urban environment and how subjects live their lives passing through them.

His work has been highlighted on Ted Forbes’ YouTube channel, The Art of Photography, on the Photowalk Show podcast hosted by Neale James, as well as by the Glass social media platform.

Outside of photography, Christopher has worked on high-profile national and international privacy, data security, and national security issues. He is currently employed at a government data privacy and transparency regulator.